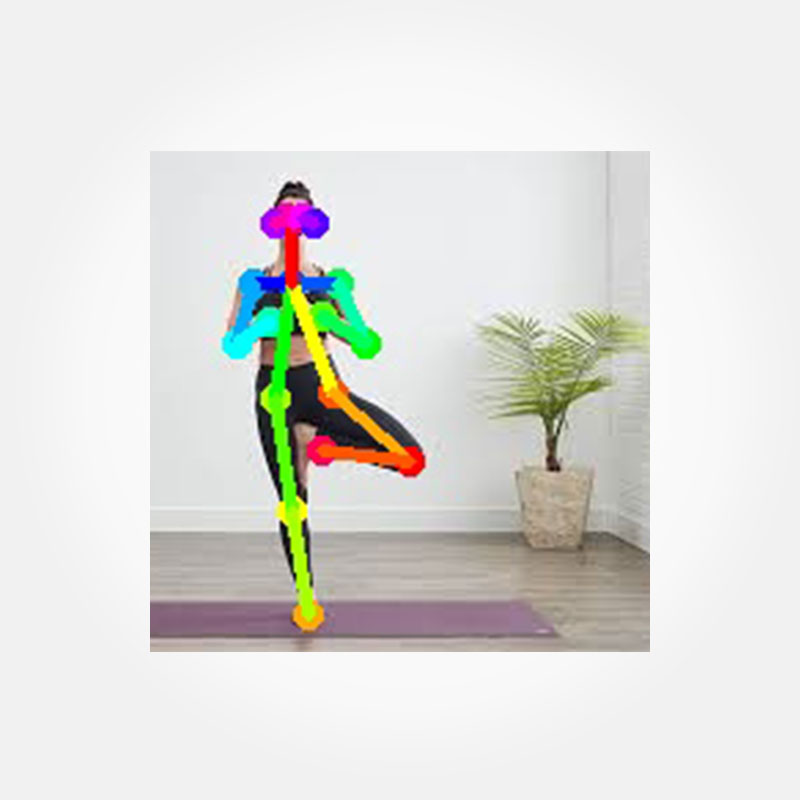

Pose Detection

Pose detection is the computer vision task of detecting and classifying the joints in the human body. It involves detecting and associating semantic key points. For each joint, it captures a set of coordinates, known as a key point, and together the key points describe the pose of a person.

The connection between two key points is known as a pair. By performing pose identification and tracking, computers can learn to read human body language.
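To make the terms concrete, here is a minimal sketch of key points and pairs as plain data. The `KeyPoint` class, the joint names, the `PAIRS` list, and the `pair_lengths` helper are all illustrative assumptions, not the output format of any particular pose detection library.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class KeyPoint:
    """One detected joint: a name plus its image coordinates (hypothetical layout)."""
    name: str
    x: float
    y: float


# Hypothetical pairs: each tuple names two key points that are connected.
PAIRS = [
    ("left_shoulder", "left_elbow"),
    ("left_elbow", "left_wrist"),
]


def pair_lengths(keypoints, pairs=PAIRS):
    """Return the pixel distance for every pair whose two key points were found."""
    by_name = {kp.name: kp for kp in keypoints}
    lengths = {}
    for a, b in pairs:
        if a in by_name and b in by_name:
            dx = by_name[a].x - by_name[b].x
            dy = by_name[a].y - by_name[b].y
            lengths[(a, b)] = hypot(dx, dy)
    return lengths


# Made-up coordinates for one arm, used only to exercise the helper.
pose = [
    KeyPoint("left_shoulder", 0.0, 0.0),
    KeyPoint("left_elbow", 3.0, 4.0),
    KeyPoint("left_wrist", 3.0, 10.0),
]
lengths = pair_lengths(pose)
```

Storing pairs by joint name rather than by index keeps the sketch readable; a real model typically fixes its own key point order and pair list.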

Human pose estimation is challenging because of factors such as varied clothing, arbitrary occlusion, different viewing angles, and background context.

Pose estimation works by locating the key points of a person or object. For a person, the key points are joints such as the elbows, knees, and wrists. There are two types of pose estimation: single-pose and multi-pose. Single-pose estimation estimates the pose of a single object in a given scene, while multi-pose estimation detects the poses of multiple objects.
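The difference between the two types shows up mainly in the shape of the result: one set of key points versus a list of them. The sketch below assumes a hypothetical `Pose` record with a confidence `score`; the field names, the 0.5 threshold, and both helper functions are illustrative assumptions rather than any specific model's API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Pose:
    """Key points for one detected object, plus an overall confidence (hypothetical)."""
    keypoints: Dict[str, Tuple[float, float]]
    score: float


def confident_poses(poses: List[Pose], min_score: float = 0.5) -> List[Pose]:
    """Multi-pose style result: keep every pose at or above the assumed threshold."""
    return [p for p in poses if p.score >= min_score]


def most_confident_pose(poses: List[Pose]) -> Optional[Pose]:
    """Single-pose style result: reduce the detections to the one best pose."""
    return max(poses, key=lambda p: p.score) if poses else None


# Made-up detections for a scene with two candidates.
detections = [
    Pose({"left_elbow": (120.0, 200.0)}, score=0.9),
    Pose({"left_elbow": (340.0, 210.0)}, score=0.4),
]
kept = confident_poses(detections)
best = most_confident_pose(detections)
```

Here `kept` is a list that may hold any number of poses, while `best` is at most one pose, which mirrors the multi-pose and single-pose cases described above.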
